RMI

Remote Media Immersion

RMI Applications

Immersed in a live college football game

Doctors performing a remote procedure perceiving the fine details of the surgery

Business people feeling as if they are in the same room

Students immersed in an aquarium environment a thousand miles away

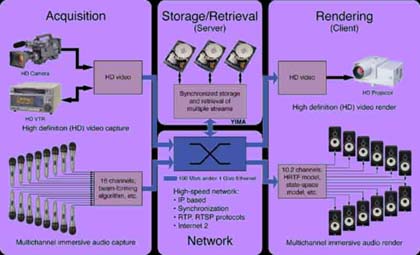

A landmark event in the evolution of Internet applications, Remote Media Immersion (RMI) demonstrates a breakthrough Internet technology for capturing, streaming, and rendering high-resolution, big-screen digital video and multichannel audio. It transforms the Internet from a low-fidelity medium for browsing information to a high-fidelity medium delivering a rich experience beyond any home medium in existence today.

RMI uses a commercial high-speed Internet link to transmit multiple streams of picture and sound across the United States from an IMSC dynamic media server. The server delivers on-demand high-resolution video and multichannel audio content at a quality that dramatically surpasses today's high-definition broadcast television.

• NSF Report

• Poster

RMI is a test bed integrating many of the technologies that are the result of IMSC's various research efforts with innovations in several major technical areas:

Yima Media Server

Real-time Digital Storage and Playback of Multiple Independent Streams. The many different streams of video and audio data are stored on and played back from the Yima system developed at IMSC. The goal of the Yima project is to design and develop an end-to-end architecture for real-time storage and playback of several high-quality digital media streams through heterogeneous, scalable, and distributed servers utilizing shared IP networks (e.g., the Internet). The system consists of 1) a scalable, high-performance, real-time media server, 2) a real-time network streaming paradigm, and 3) several video and audio clients.

On the server side, the Yima architecture combines multiple commodity PCs into a server cluster to scale as demand grows. We currently use 933 MHz Pentium III computers running Linux, each with a 3Com 3C996B-T Gigabit Ethernet interface and a Seagate 73 GB Ultra160 SCSI LVD Cheetah disk for data storage. The client systems communicate with the server through the industry-standard RTP and RTSP protocols, which we have extended with our own buffer management feedback control mechanism and synchronization facilities. The Yima clients can render many different digital media flavors, e.g., from low-bitrate MPEG-4 to very high-bitrate MPEG-2 with multichannel audio. Specifically, the RMI client is a Linux-based PC that uses the RME 9652 "Hammerfall" multichannel sound card to provide up to 16 (or 24) channels of uncompressed PCM audio for immersive sound. High-definition MPEG-2 video decoding is achieved via a Vela Research CineCast HD board interfacing through our own Linux drivers. Additionally, the client software synchronizes the audio and video streams to provide frame-accurate rendering.
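
To make the role of RTP more concrete, the sketch below parses the 12-byte fixed header that every standard RTP packet carries. It shows only the fields defined by the RTP standard; the Yima-specific buffer feedback and synchronization extensions are not described here and are not modeled. The names RtpHeader and parse_rtp_header are hypothetical and chosen for illustration.

```python
import struct
from typing import NamedTuple


class RtpHeader(NamedTuple):
    """Fields of the standard 12-byte RTP fixed header (illustrative type)."""
    version: int
    marker: bool
    payload_type: int
    sequence_number: int
    timestamp: int
    ssrc: int


def parse_rtp_header(packet: bytes) -> RtpHeader:
    """Decode the fixed RTP header at the start of a packet."""
    if len(packet) < 12:
        raise ValueError("an RTP packet needs at least a 12-byte header")
    # Network byte order: two flag bytes, 16-bit sequence number,
    # 32-bit media timestamp, 32-bit synchronization source id.
    b0, b1, seq, ts, ssrc = struct.unpack("!BBHII", packet[:12])
    version = b0 >> 6
    if version != 2:
        raise ValueError(f"unsupported RTP version {version}")
    return RtpHeader(
        version=version,
        marker=bool(b1 & 0x80),
        payload_type=b1 & 0x7F,
        sequence_number=seq,
        timestamp=ts,
        ssrc=ssrc,
    )


# Example packet: version 2, marker set, payload type 96 (an arbitrary
# value from the dynamic range), followed by a dummy payload.
example = struct.pack("!BBHII", 0x80, 0x80 | 96, 1234, 90000, 0xDEADBEEF) + b"data"
header = parse_rtp_header(example)
print(header)
```

The sequence number lets a client detect lost packets, and the timestamp places each packet on the media timeline, which is what makes loss recovery and inter-stream synchronization possible on top of RTP.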

Yima distinguishes itself from similar efforts through its 1) independence from media types, 2) frame/sample-accurate inter-stream synchronization, 3) compliance with industry standards (e.g., RTSP, RTP, MP4), 4) selective retransmission protocol, 5) scalable design, and 6) multi-threshold buffering to support variable-bitrate media (e.g., MPEG-2, MPEG-4, DivX).
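
The idea behind multi-threshold buffering can be sketched as follows. This is a minimal illustration, not the Yima implementation: the threshold positions, the rate factors, and the function name rate_adjustment are all assumptions made for the example. The client watches how full its playback buffer is and asks the server for a graduated change in sending rate depending on which zone the fill level falls into.

```python
import bisect

# Assumed watermarks (fractions of buffer capacity) and the sending-rate
# factor requested in each of the five zones they define. Illustrative only.
THRESHOLDS = (0.2, 0.4, 0.6, 0.8)
FACTORS = (1.5, 1.2, 1.0, 0.8, 0.5)


def rate_adjustment(fill_fraction: float,
                    thresholds=THRESHOLDS,
                    factors=FACTORS) -> float:
    """Return the rate factor to request for a given buffer fill level."""
    if not 0.0 <= fill_fraction <= 1.0:
        raise ValueError("fill_fraction must be between 0 and 1")
    if len(factors) != len(thresholds) + 1:
        raise ValueError("need exactly one factor per zone")
    # bisect_right counts how many thresholds the fill level has reached,
    # which is the index of the zone the buffer is currently in.
    zone = bisect.bisect_right(thresholds, fill_fraction)
    return factors[zone]


for fill in (0.1, 0.3, 0.5, 0.7, 0.9):
    print(f"buffer {fill:.0%} full -> request {rate_adjustment(fill):.1f}x rate")
```

With several thresholds instead of a single high/low pair, small deviations caused by variable-bitrate content produce small corrections, and only a nearly empty or nearly full buffer triggers a strong one.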

Immersive Audio

Immersive audio is a set of signal processing methods developed at IMSC that are used to accurately capture and render sound for multiple listeners. It includes methods for capturing the acoustics of the space and the directionality of individual sounds, algorithms for synthesizing multichannel sound from a few original signals (virtual microphones), techniques for measuring the listening characteristics and subjective preferences of listeners, methods for rendering sound using multiple loudspeakers and algorithms for removing the distortions introduced by the acoustics of the playback environment. The combination of these technologies is used to create a seamless sonic environment for a group of listeners.

Error Correction

The RMI video is transmitted at a rate of 45 Megabits per second (Mb/s), faster than the 20 Mb/s rate for broadcast HDTV. In general, increasing the data transmission rate improves the picture quality and realism. It also reduces the overall delay in transmitting the information through a network by simplifying the video compression and other signal processing required. Reducing delay is particularly important for two-way (interactive) applications of RMI. RMI technology has the potential to increase the data transmission rate to more than 1000 Mb/s (1 Gigabit per second), offering the potential for even better video (larger screens, panoramic or hemispherical displays, and increased resolution).
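
The rates quoted above translate into storage and bandwidth figures with some simple arithmetic. The sketch below uses the 45 Mb/s and 20 Mb/s rates from this section and the 73 GB disk size of the server nodes. It assumes decimal units (1 GB = 10^9 bytes), counts the video stream only, and ignores audio, protocol, and file system overhead, so the results are rough estimates.

```python
def gigabytes_per_hour(rate_mbps: float) -> float:
    """Storage consumed by one hour of a constant-rate stream, in decimal GB."""
    bits_per_hour = rate_mbps * 1_000_000 * 3600
    return bits_per_hour / 8 / 1_000_000_000


RMI_VIDEO_MBPS = 45.0        # RMI video rate
BROADCAST_HDTV_MBPS = 20.0   # broadcast HDTV rate
DISK_GB = 73.0               # capacity of one server disk (decimal GB assumed)

rate_ratio = RMI_VIDEO_MBPS / BROADCAST_HDTV_MBPS
rmi_gb_per_hour = gigabytes_per_hour(RMI_VIDEO_MBPS)
hours_on_disk = DISK_GB / rmi_gb_per_hour

print(f"RMI video carries {rate_ratio:.2f}x the bits of broadcast HDTV")
print(f"One hour of RMI video needs about {rmi_gb_per_hour:.2f} GB")
print(f"A {DISK_GB:.0f} GB disk holds about {hours_on_disk:.1f} hours of video")
```

Under these assumptions, one hour of RMI video occupies about 20 GB, so a single 73 GB disk holds roughly three and a half hours of content.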

We have performed experiments across both LAN and WAN environments. Our most recent tests were conducted via a transcontinental SUPERNET link from the Information Sciences Institute (ISI East) in Arlington, VA, to the USC campus in Los Angeles, CA. See also the SUPERNET Next Generation Internet (NGI) Experiments web site.

Synchronization

The RMI system is capable of transmitting data from multiple distributed servers over local-area and wide-area shared networks to many destinations simultaneously. One of the biggest challenges is to minimize the transmission delay, avoid any loss of data that could lead to hiccups or stuttering in the displayed audio and video, and ensure synchronization of all the streams when they are finally rendered at the client site. These issues are referred to as quality of service (QoS), and are very important factors in the design of the RMI system. Our continuing work involves the optimization of procedures to ensure QoS for general immersive technology applications over many different types of networks.
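
One common way to keep separately delivered streams aligned at the client is to place every stream on a shared timeline and let one stream act as the master clock. The sketch below illustrates that general idea only; it is not the RMI synchronization mechanism, and the function names, the half-frame tolerance, and the choice of audio as master are assumptions. The 90 kHz clock in the example is the rate conventionally used for video timestamps in RTP.

```python
def media_time_seconds(timestamp: int, first_timestamp: int, clock_rate: int) -> float:
    """Convert a 32-bit media timestamp to seconds since the stream started."""
    # Modulo arithmetic keeps the result correct when the 32-bit
    # timestamp counter wraps around.
    elapsed_ticks = (timestamp - first_timestamp) % 2**32
    return elapsed_ticks / clock_rate


def video_action(video_time: float, audio_time: float, frame_duration: float) -> str:
    """Decide what to do with a video frame, using audio as the master clock."""
    drift = video_time - audio_time
    if drift > frame_duration / 2:
        return "wait"    # frame is early: hold it until audio catches up
    if drift < -frame_duration / 2:
        return "drop"    # frame is late: skip it to catch up with audio
    return "render"      # within half a frame of the audio position


FRAME_DURATION = 1 / 30  # assumed frame rate of 30 frames per second
video_t = media_time_seconds(93_000, 3_000, 90_000)  # 90 kHz video clock
print(video_t, video_action(video_t, 1.0, FRAME_DURATION))
```

Keeping the decision within half a frame period is what makes the result frame-accurate in this sketch: a frame is shown only when the audio position falls inside that frame's own display interval.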
